Keep in mind that the M1 is also an iOS chip, and iOS pushes background user apps down in priority, while macOS doesn’t. So on iOS, having more E cores lets background work keep running without hurting the user experience. iOS offers only limited background APIs, and the QoS of that work can be set to background with high confidence that it’s the right QoS. macOS doesn’t follow that pattern: apps that are active but not in the foreground can run work at higher QoS levels, which gets assigned to the P cores and increases contention for them.

That said, since the E cores can pick up work assigned to the P cores when the P cores are full, there’s no real harm in having a couple more than you need. The extra E cores also let the M1 squeeze more work through and keep latencies lower under load than it could without them.

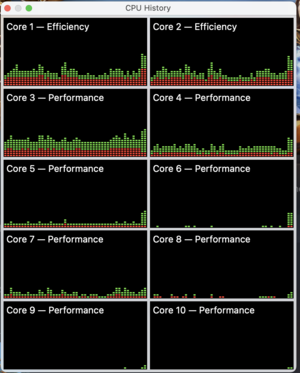

Now, when you have “enough” P cores, fewer threads spill onto the E cores. For work assigned to the P cores, the M1 Pro/Max, with two performance-core clusters, favors the first P cluster, then the second, and only then the E cores.
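To make that placement order concrete, here’s a toy model in Python. It is not Apple’s scheduler, whose details aren’t public. It assumes the M1 Pro/Max has 8 P cores in two clusters of 4 plus 2 E cores, the M1 has 4 P cores plus 4 E cores, background-QoS threads stay on the E cores, and a thread with no free core simply waits (no preemption). The names `Cluster`, `place`, and `simulate` are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Cluster:
    """A core cluster in a toy model; not Apple's real scheduler."""
    name: str
    cores: int
    kind: str  # "P" (performance) or "E" (efficiency)
    running: list[str] = field(default_factory=list)

    def has_room(self) -> bool:
        return len(self.running) < self.cores


def place(thread: str, qos: str, clusters: list[Cluster]) -> str | None:
    """Put a thread on the first cluster with a free core; return its name or None."""
    p_clusters = [c for c in clusters if c.kind == "P"]
    e_clusters = [c for c in clusters if c.kind == "E"]
    if qos == "background":
        # Assumption: background-QoS work is confined to the E cores.
        candidates = e_clusters
    else:
        # Higher-QoS work tries the P clusters in order, then spills onto E cores.
        candidates = p_clusters + e_clusters
    for cluster in candidates:
        if cluster.has_room():
            cluster.running.append(thread)
            return cluster.name
    return None  # no free core: in this model the thread just waits


def simulate(clusters: list[Cluster], workload: list[tuple[str, str]]):
    """Place (thread, qos) pairs in order; return per-cluster counts and waiting threads."""
    waiting = []
    for name, qos in workload:
        if place(name, qos, clusters) is None:
            waiting.append(name)
    counts = {c.name: len(c.running) for c in clusters}
    return counts, waiting


if __name__ == "__main__":
    # Six user-facing threads plus one background indexer (hypothetical workload).
    workload = [(f"ui{i}", "user") for i in range(6)] + [("indexer", "background")]
    m1 = [Cluster("P", 4, "P"), Cluster("E", 4, "E")]
    m1_pro = [Cluster("P0", 4, "P"), Cluster("P1", 4, "P"), Cluster("E", 2, "E")]
    print("M1:    ", simulate(m1, workload))
    print("M1 Pro:", simulate(m1_pro, workload))
```

With this workload, the M1 model fills its P cluster and spills two user threads onto the E cores next to the indexer, while the M1 Pro model absorbs the overflow in its second P cluster and leaves the E cores to the background work.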

Only Apple has the specific measurements, but I suspect Apple found that if the second P cluster is kept idle until there’s work for it, it absorbs most of the spillover from the first cluster. The E cluster is then needed less for spillover and can be dedicated mostly to low-priority work where latency doesn’t matter.