Some basic questions, since I don't know anything about this:

1) Games (and scientific visualizations): What's the story with TBDR? From what I've gathered (which may be wrong), (a) if you're writing a AAA-class game and want it to be maximally optimized to run on AS, you need to make use of this; and (b) other platforms don't use this, so writing games in a way that takes advantage of TBDR is a barrier in terms of developer expertise. Or is it the case that, if you're writing in Metal, using TBDR is simply a natural part of that? When Capcom made the new RE game for MacOS, did they likely make extensive use of TBDR?

What follows is an attempt at a detailed breakdown, but first a short TL;DR: TBDR is always on and all applications benefit from it. Applications running on a TBDR GPU can be further optimised by opting in to some TBDR-specific APIs; some of these are trivial to use and can offer big wins, while others require significant redesigns but potentially offer much bigger wins. You choose how far to go down this rabbit hole as a developer. Does Capcom utilise all the features of Apple GPUs? Only they and Apple know.

And now in more detail:

TBDR is a specific way to do primitive rasterization and shading. It consists of two parts: tile-based (TB) rasterization and deferred rendering/shading (DR). Taking each in turn:

Tile-based (TB) rasterization: the screen is split into small tiles (usually 32x32 pixels), the rendered primitives are sorted by the tiles they intersect, and the processing is done per tile rather than per whole primitive. TB is a memory bandwidth optimisation technique. Since you know that the processing is limited to a specific small area of the screen, you can do all the work using fast on-chip memory, which saves you roundtrips to the video memory. You only need to save the final result (the drawn tile) to the video memory, which is much more efficient than sending individual pixel data back and forth, especially if you have many overlapping primitives. And since the pixels you are processing are guaranteed to be close to each other, it is also more likely that the texture data you have to fetch will share cache lines, further improving memory bandwidth efficiency.
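To make the "sorted by tile" step a bit more concrete, here is a minimal Python sketch of binning. It is only an illustration: it assumes screen-space triangles given as three `(x, y)` points and bins them by bounding box, which is conservative (a triangle may land in a tile it does not actually touch). The function name `bin_triangles` and the data layout are made up for this example; real hardware does this in dedicated logic and the exact method is not public.

```python
import math

TILE = 32  # tile edge in pixels, the size quoted above


def bin_triangles(triangles, width, height, tile=TILE):
    """Map each tile (tx, ty) to the indices of triangles whose
    screen-space bounding box overlaps that tile."""
    bins = {}
    for index, tri in enumerate(triangles):
        xs = [p[0] for p in tri]
        ys = [p[1] for p in tri]
        # Clamp the bounding box to the visible screen area.
        min_x = max(0, math.floor(min(xs)))
        max_x = min(width - 1, math.floor(max(xs)))
        min_y = max(0, math.floor(min(ys)))
        max_y = min(height - 1, math.floor(max(ys)))
        if min_x > max_x or min_y > max_y:
            continue  # entirely off-screen, nothing to bin
        for ty in range(min_y // tile, max_y // tile + 1):
            for tx in range(min_x // tile, max_x // tile + 1):
                bins.setdefault((tx, ty), []).append(index)
    return bins


triangles = [
    ((1, 1), (10, 1), (1, 10)),       # fits inside the top-left tile
    ((30, 30), (40, 30), (30, 40)),   # straddles four tiles
]
bins = bin_triangles(triangles, width=64, height=64)
```

After binning, each tile can be processed on its own using only the short list of primitives that might touch it, which is what makes the on-chip tile memory usable.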

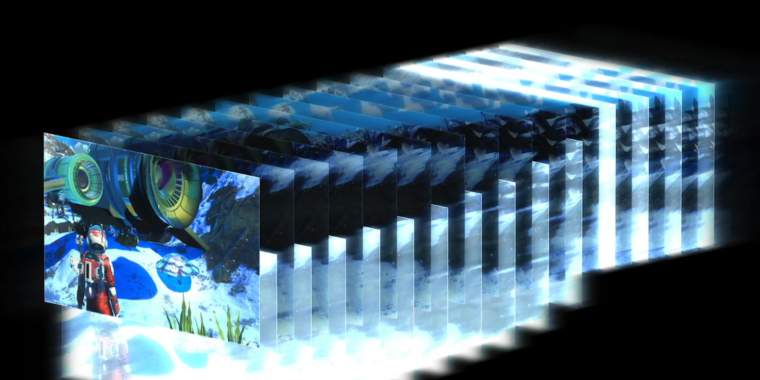

Deferred rendering/shading (DR): the pixel shader is only run after all the primitives have been rasterised. This improves shader core utilisation. First, you only have to shade visible pixels, because shading is done after the pixel visibility has been fully determined (this is why they say that TBDR has perfect hidden surface removal). Second, with DR you shade the entire tile at once, that is, the shader is run on the full tile of 32x32 pixels, which is much more efficient than dispatching shader work for pixels of individual primitives, especially if the primitives are small (you lose SIMD efficiency around the primitive edges).
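A toy model shows why deferring the shading helps with overdraw. The sketch below counts pixel shader invocations for one tile under two strategies. It assumes opaque primitives only, each given as a hypothetical `(covered_pixels, depth)` pair with smaller depth meaning closer; the function names are invented for illustration and this is not how any real GPU is implemented.

```python
def immediate_shading_count(primitives):
    """Shade each fragment as it arrives if it passes the depth test
    (an immediate-mode renderer with an early depth test)."""
    depth = {}
    shaded = 0
    for covered_pixels, z in primitives:
        for pixel in covered_pixels:
            if z < depth.get(pixel, float("inf")):
                depth[pixel] = z
                shaded += 1  # shaded now, may be overwritten later
    return shaded


def deferred_shading_count(primitives):
    """Resolve visibility for the whole tile first, then shade each
    covered pixel exactly once."""
    nearest = {}
    for covered_pixels, z in primitives:
        for pixel in covered_pixels:
            if z < nearest.get(pixel, float("inf")):
                nearest[pixel] = z
    return len(nearest)


tile_pixels = [(x, y) for y in range(32) for x in range(32)]
# Three opaque layers covering the full tile, submitted back to front
# (the worst case for the immediate approach).
layers = [(tile_pixels, 3.0), (tile_pixels, 2.0), (tile_pixels, 1.0)]

immediate = immediate_shading_count(layers)  # 3 * 1024 invocations
deferred = deferred_shading_count(layers)    # 1024 invocations
```

With front-to-back submission the immediate count would drop to 1024 as well, which is why engines targeting immediate-mode GPUs sort opaque geometry or run a depth pre-pass. The deferred approach gets the same result regardless of submission order.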

Virtually all mobile rasterisers do TB rasterization, since it's easy to do and brings very obvious benefits on devices with constrained memory bandwidth. What's more, since TB approaches massively improve cache utilisation, modern desktop GPUs have also adopted some tile-based methods; that is the secret behind the improved power efficiency of Nvidia Maxwell and later, for example. Almost nobody does the deferred shading part, however, since that is the notoriously difficult part of the story. The only company that managed to successfully develop DR technology is Imagination; Apple got it from them and refined it further. That's why Apple is in a rather unique position: their GPUs are "true" TBDR while other mobile GPUs are just "TB".

So, now that we have established the terminology, we can get to your question.

Any application that does GPU-driven rasterization, no matter the API they use, is going to benefit from TBDR on Apple GPUs, simply because that's how Apple GPUs operate. But it is possible to get more performance/efficiency by explicitly taking advantage of the architecture of these GPUs.

The first, low-hanging fruit is to provide the GPU with some hints as to how the data is used. Recall that a TB GPU operates on small tiles. The data for these tiles has to be fetched from the frame buffer (in the video RAM) and then written back, which obviously requires memory bandwidth. But quite often, you can skip these steps. For example, if you start your drawing from a clean slate, you don't have to fetch the tile contents from the frame buffer. Or, if you are not using the depth buffer for any further post-processing (like ambient occlusion), you don't have to save its data in the system memory at all. By telling the API which components of the frame buffer you will use, and how, you let a TB GPU dramatically reduce the memory transfers and thus improve efficiency. Such APIs are not exclusive to Apple (although Apple was the first to come up with them); they benefit any modern mobile GPU, and that's why they are supported everywhere from DX12 to Vulkan (and obviously in Metal). The only problem is that developers are often not too familiar with them, as they are used to desktop GPUs where such hints are meaningless, so they often don't set them up properly. Regardless, doing this is very simple and any bugs can be fixed quickly, so it's the super easy part of making things run better on tile-based renderers.
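Some back-of-the-envelope arithmetic shows how much these hints can matter. The Python sketch below is a deliberately crude model: it assumes uncompressed attachments, one render pass per frame, and that each attachment is read in full on "load" and written in full on "store". The action names mirror Metal's `MTLLoadAction` (`load`, `clear`, `dontCare`) and `MTLStoreAction` (`store`, `dontCare`), but the function itself is hypothetical and not part of any API.

```python
def attachment_traffic_bytes(width, height, bytes_per_pixel, load, store):
    """Estimated video-memory traffic for one attachment in one pass.

    load:  "load" reads the old contents; "clear" and "dontCare" do not.
    store: "store" writes the result back; "dontCare" does not.
    """
    size = width * height * bytes_per_pixel
    read = size if load == "load" else 0
    write = size if store == "store" else 0
    return read + write


W, H = 1920, 1080

# Careless setup: load and store both colour (4 B/px) and depth (4 B/px).
careless = (
    attachment_traffic_bytes(W, H, 4, "load", "store")
    + attachment_traffic_bytes(W, H, 4, "load", "store")
)

# Hinted setup: clear both on-chip, store only colour, discard depth.
hinted = (
    attachment_traffic_bytes(W, H, 4, "clear", "store")
    + attachment_traffic_bytes(W, H, 4, "clear", "dontCare")
)

print(careless, hinted)  # 33177600 8294400
```

In this model the hinted pass moves a quarter of the data of the careless one, and at 60 frames per second that is roughly 1.5 GB/s saved for a single 1080p pass. Real numbers depend on compression, multisampling and how many passes a frame has, so treat this as an order-of-magnitude illustration.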

The other optimisation step, which is much more intricate and limited to Apple GPUs only, is to use the special capabilities Apple exposes in Metal. These capabilities fully expose the nature of the TBDR GPU and allow you to do very complex per-tile processing without ever touching the slow video memory. Apple allows you to freely mix pixel and compute shaders and store complex data structures in the on-chip tile memory, which lets you implement many complex rendering algorithms in a much simpler and far more effective fashion. Examples include advanced per-pixel shading, which has traditionally been an expensive technique to use. With a "regular renderer", you have to deal with lights for every pixel. With Apple's TBDR extensions, you can use a compute shader to gather all the lights that can affect pixels in a tile (this is much cheaper to do for a tile than for individual pixels) and then light all pixels in the tile at once. These are really cool features and easily my favourite part of Metal and Apple Silicon, but they require you to rethink your algorithms and approaches and are therefore a less likely target for a straightforward port.
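The per-tile light gathering idea can be sketched on the CPU in a few lines of Python. This is only a model of the algorithm, not Metal code: it assumes lights are given in screen space as hypothetical `(x, y, radius)` circles and uses a circle-versus-rectangle test per tile. On Apple GPUs the equivalent work would run in a tile or compute shader with the light list kept in tile memory.

```python
def lights_per_tile(lights, width, height, tile=32):
    """For every tile, list the indices of lights whose circle of
    influence overlaps the tile rectangle."""
    result = {}
    for y0 in range(0, height, tile):
        for x0 in range(0, width, tile):
            x1 = min(x0 + tile, width)
            y1 = min(y0 + tile, height)
            hits = []
            for i, (lx, ly, radius) in enumerate(lights):
                # Closest point of the tile rectangle to the light centre.
                cx = min(max(lx, x0), x1)
                cy = min(max(ly, y0), y1)
                if (lx - cx) ** 2 + (ly - cy) ** 2 <= radius * radius:
                    hits.append(i)
            result[(x0 // tile, y0 // tile)] = hits
    return result


def light_evaluations(per_tile, width, height, tile=32):
    """Count per-pixel light evaluations when each pixel only loops
    over the lights gathered for its tile."""
    total = 0
    for (tx, ty), hits in per_tile.items():
        w = min((tx + 1) * tile, width) - tx * tile
        h = min((ty + 1) * tile, height) - ty * tile
        total += w * h * len(hits)
    return total


lights = [(16, 16, 8), (48, 48, 8)]  # two small lights in opposite corners
per_tile = lights_per_tile(lights, 64, 64)

naive = 64 * 64 * len(lights)                  # every pixel checks every light
tiled = light_evaluations(per_tile, 64, 64)    # pixels check only their tile's lights
```

In this tiny scene the naive approach does 8192 light evaluations and the tiled one does 2048, because two of the four tiles see no lights at all and the other two see one each. The gap grows with the number of small local lights.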

2) ML: IIUC, NVIDIA is the dominant player in high-end ML for three reasons: performance (which means both the software tools [CUDA, including cuDNN] and the hardware), ease of use (a lot of ML users are scientists rather than programmers, and most find CUDA pretty accessible), and the size of the community/knowledge base. That doesn't seem to be Apple's market (e.g., their GPUs are still single-precision). But how much of a place is there for AS in ML at the lower end? It seems that, even at the lower end, for someone that wants to do anything more than occasional ML, the path of least resistance is to get an NVIDIA box. How accessible is Metal for doing ML as compared with coding for CUDA?

I think there are two parts to this. First, almost nobody uses GPU APIs like CUDA or Metal directly to do ML. People use an ML framework such as TensorFlow or PyTorch. That's the beauty of it all. You can develop and test your ML model on your ultracompact Mac laptop and then push the same model to a supercomputer for the real training. These frameworks abstract away the APIs and the GPUs they run on. Nvidia GPUs are still faster of course. The goal is not to beat Nvidia here; the goal is to make working with these frameworks on a Mac just fast and convenient enough that other benefits of the Mac (efficiency, weight, ergonomics, convenience) will push data folks to choose a Mac laptop. And who knows what the future will bring. Maybe next-gen Apple prosumer chips will come with a much more capable ML accelerator and you'd get a huge speedup for your existing code.
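As a small illustration of that abstraction, this is roughly what device selection looks like in PyTorch. It is a sketch under a few assumptions: the helper name `pick_device` is made up, it falls back to `"cpu"` when PyTorch is not installed, and it relies on `torch.backends.mps.is_available()`, which exists in PyTorch 1.12 and later (the `getattr` guard covers older versions).

```python
def pick_device():
    """Return the name of the best available PyTorch device:
    "cuda" on an Nvidia box, "mps" (Metal) on an Apple Silicon Mac,
    otherwise "cpu"."""
    try:
        import torch
    except ImportError:
        return "cpu"  # assumption: no PyTorch means plain CPU code
    if torch.cuda.is_available():
        return "cuda"
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"
    return "cpu"


device = pick_device()
print(device)
```

The rest of the model code then just moves tensors and modules to `device` and stays the same on the laptop and on the cluster.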

The second part is to use the GPU APIs to implement ML directly. As I said, this is rarely done, mostly when you have some very exotic needs or you already know what your model is and just want to integrate it into your application or optimise the hell out of it. For the latter, Apple does have an efficient NPU that's optimised for low-power ML inference in applications. But that is not something data scientists are interested in.

3) Is Metal 3 fully supported on every Mac that can run Ventura, or are certain features available only on AS?

It's supported on all newer Intel and AMD GPUs (Iris Plus and Vega and up) — so you get things like raytracing etc. on all of them. Some features (like all the TBDR extensions etc.) are obviously Apple Silicon only.